Markov model state topology

The second piece of coursework for my speech & audio processing & recognition module focused on the machine learning side of the field. One of the main methods we learnt about was the Hidden Markov Model and how to train it; this coursework tested that theory. My submission achieved 98%.

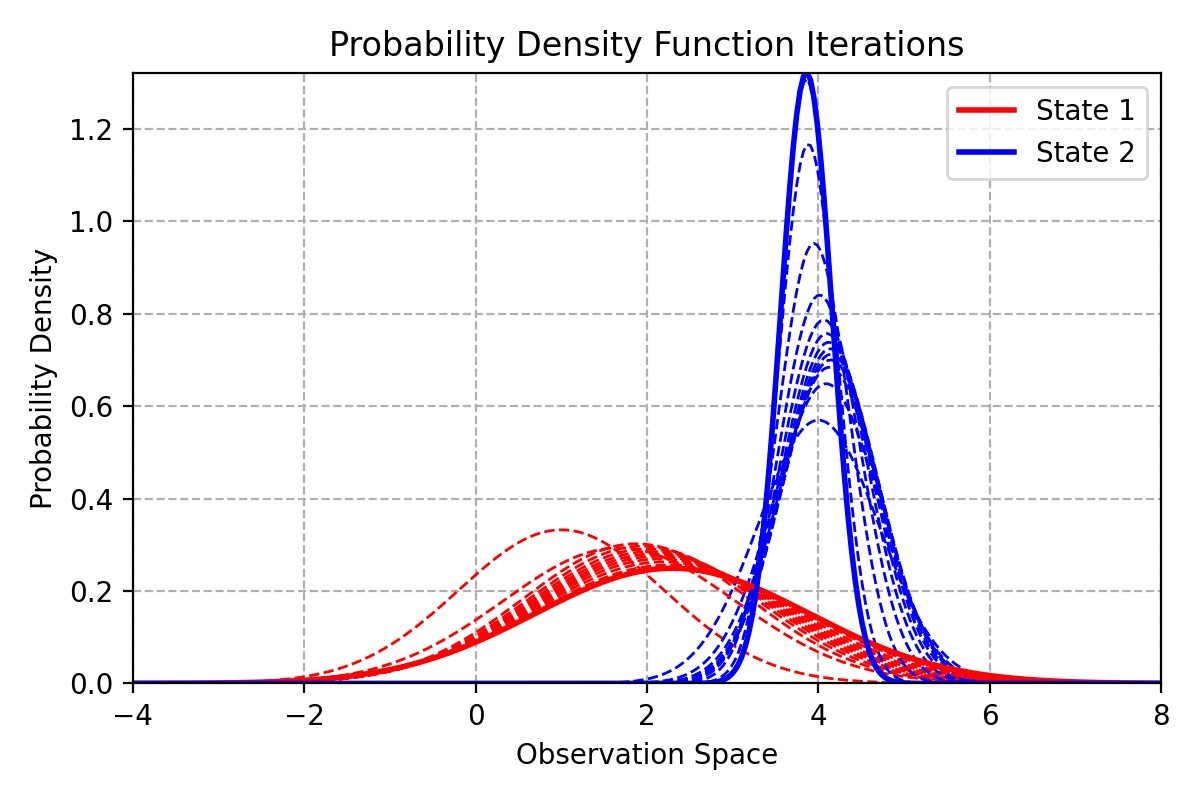

Re-estimated probability density function outputs after training

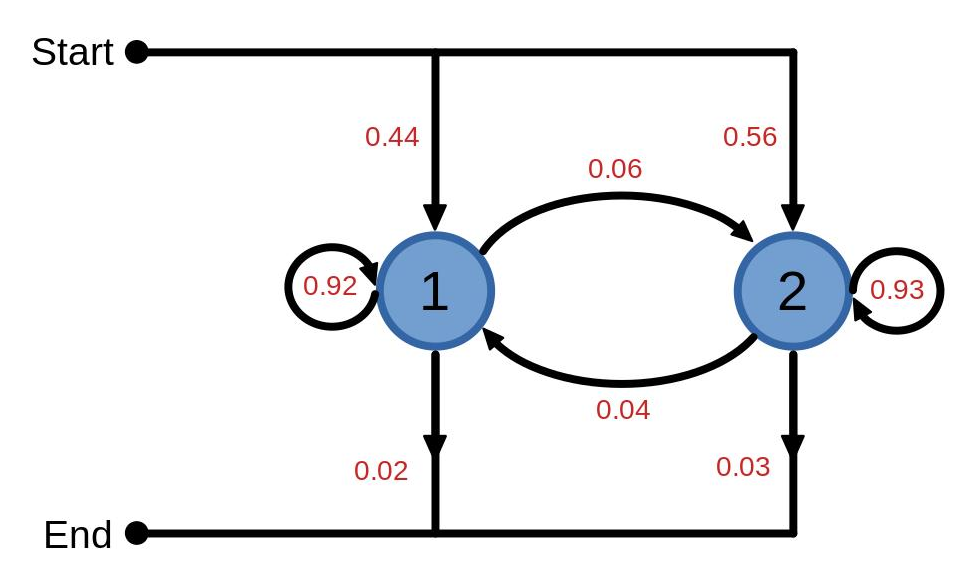

The provided spec for the model included the entry, exit and transition probabilities, the parameters for each state’s Gaussian output function and the observations used for training.

From here, the coursework tested the ability to calculate and analyse various aspects of the model, including the forward, backward, occupation and transition likelihoods. A single iteration of Baum-Welch training was then completed, resulting in a new set of transition probabilities and output function parameters.
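As a rough illustration of those calculations, here is a minimal sketch of the forward and backward passes, the occupation likelihoods and a re-estimate of the Gaussian means. It is not the coursework solution: the two-state model, every probability, the Gaussian parameters and the observations below are invented for the example, and it assumes one-dimensional observations with explicit entry and exit probabilities.

```python
import math


def gaussian_pdf(x, mean, var):
    """Density of a 1-D Gaussian with the given mean and variance."""
    return math.exp(-((x - mean) ** 2) / (2.0 * var)) / math.sqrt(2.0 * math.pi * var)


def forward_backward(obs, entry, trans, exit_probs, means, variances):
    """Return forward, backward and occupation likelihoods plus P(observations)."""
    n, T = len(entry), len(obs)
    # b[t][i]: output density of state i for the observation at time t
    b = [[gaussian_pdf(o, means[i], variances[i]) for i in range(n)] for o in obs]

    # Forward pass: alpha[t][i] = P(obs[0..t], state i at time t)
    alpha = [[0.0] * n for _ in range(T)]
    for i in range(n):
        alpha[0][i] = entry[i] * b[0][i]
    for t in range(1, T):
        for j in range(n):
            alpha[t][j] = sum(alpha[t - 1][i] * trans[i][j] for i in range(n)) * b[t][j]
    total = sum(alpha[T - 1][i] * exit_probs[i] for i in range(n))

    # Backward pass: beta[t][i] = P(obs[t+1..], exit | state i at time t)
    beta = [[0.0] * n for _ in range(T)]
    for i in range(n):
        beta[T - 1][i] = exit_probs[i]
    for t in range(T - 2, -1, -1):
        for i in range(n):
            beta[t][i] = sum(trans[i][j] * b[t + 1][j] * beta[t + 1][j] for j in range(n))

    # Occupation likelihoods: product of forward and backward, normalised by the total
    gamma = [[alpha[t][i] * beta[t][i] / total for i in range(n)] for t in range(T)]
    return alpha, beta, gamma, total


def reestimate_means(obs, gamma):
    """Baum-Welch style mean update: occupation-weighted average of the observations."""
    n = len(gamma[0])
    return [
        sum(g[i] * o for g, o in zip(gamma, obs)) / sum(g[i] for g in gamma)
        for i in range(n)
    ]


# Hypothetical model and data, invented for this sketch (not the coursework spec).
entry = [0.5, 0.5]
trans = [[0.8, 0.1], [0.1, 0.8]]  # each row plus its exit probability sums to 1
exit_probs = [0.1, 0.1]
means, variances = [1.0, 4.0], [1.0, 1.0]
obs = [3.8, 4.2, 3.4, 1.2, 0.8, 1.5, 0.9, 4.1]

alpha, beta, gamma, total = forward_backward(obs, entry, trans, exit_probs, means, variances)
new_means = reestimate_means(obs, gamma)
```

The sketch works with raw probabilities, which is fine for a handful of observations; longer sequences would normally need scaling or log-domain arithmetic to avoid underflow. The variances and transition probabilities would be re-estimated in a similar occupation-weighted way.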

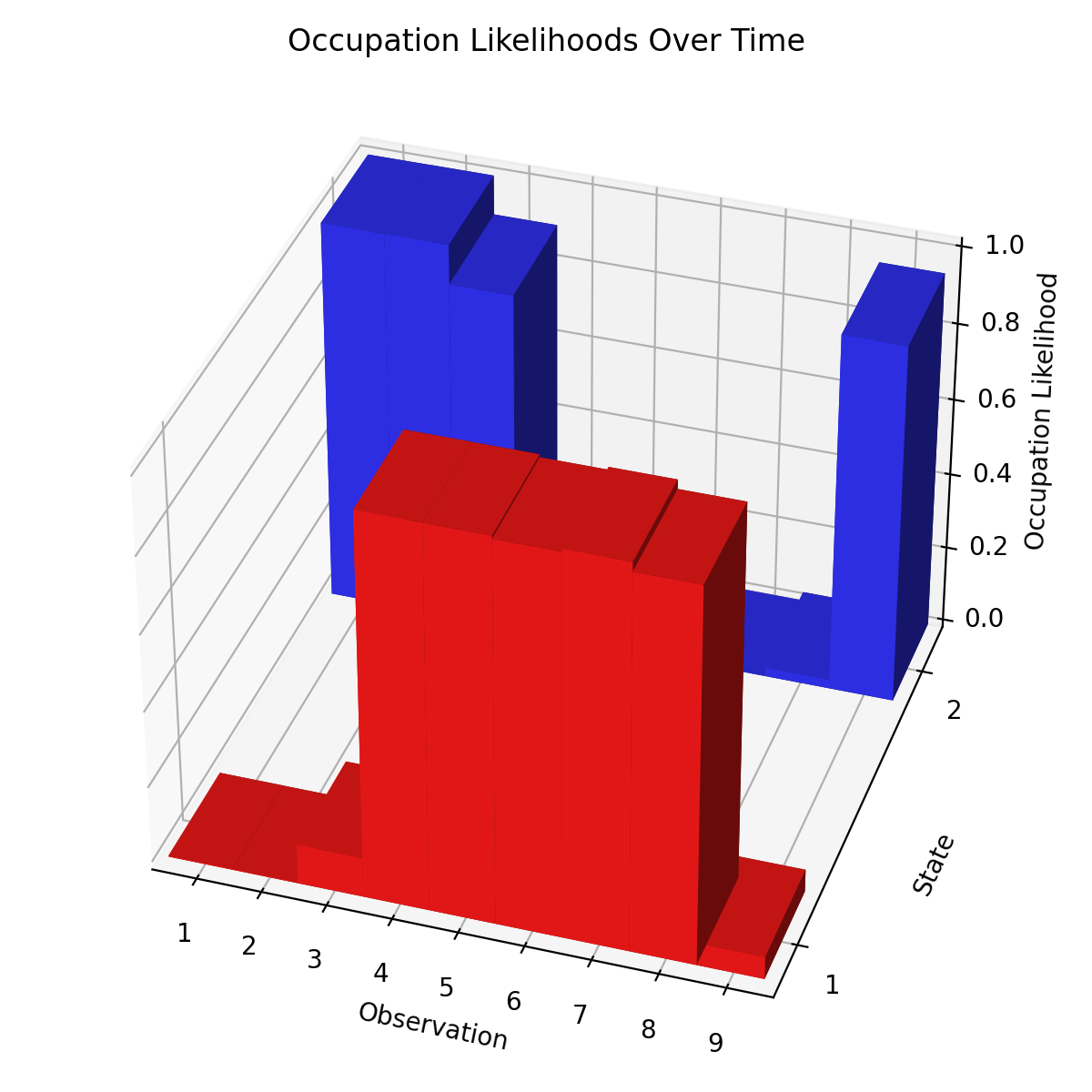

Probability of being in each state at each time step or observation

The graph above shows the occupation likelihood of each state at each time step, i.e. for each observation. Each value is the product of the forward and backward likelihoods for that state and time, normalised by the total likelihood of the observation sequence. From the graph, it looks like the observations were generated by state 2 for the first 3 time steps, then by state 1 for 4 time steps, before returning to state 2 for the last one.
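Reading the state sequence off the graph can also be done programmatically. The sketch below picks the most likely state at each time step and groups consecutive steps into runs. The occupation values in it are made up to resemble the shape described here; they are not the figures from my submission, and the helper names are purely illustrative.

```python
from itertools import groupby

# Hypothetical occupation likelihoods, one row per time step: [state 1, state 2].
# These numbers are invented for illustration only.
gamma = [
    [0.02, 0.98],
    [0.01, 0.99],
    [0.10, 0.90],
    [0.95, 0.05],
    [0.99, 0.01],
    [0.97, 0.03],
    [0.93, 0.07],
    [0.04, 0.96],
]


def most_likely_states(gamma):
    """Return the 1-based index of the most probable state at each time step."""
    return [max(range(len(row)), key=row.__getitem__) + 1 for row in gamma]


def run_lengths(states):
    """Collapse a state sequence into (state, number of consecutive steps) pairs."""
    return [(state, len(list(group))) for state, group in groupby(states)]


states = most_likely_states(gamma)
runs = run_lengths(states)
print(runs)
```

Note that taking the most likely state independently at each time step is not the same as finding the single best path through the model; that would need the Viterbi algorithm.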